Custom Gradient Boosting with PackBoost

PackBoost is a domain-specific gradient boosting algorithm designed to handle constraints not supported by standard libraries.

It builds on gradient-boosted decision trees, which aggregate many weak learners into a single model.
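For readers new to the underlying idea, here is a generic squared-loss gradient boosting loop. It uses depth-1 trees (stumps) as the weak learners. It is a textbook sketch, not PackBoost's implementation, and all function names are illustrative.

```python
import numpy as np


def fit_stump(X, r):
    """Fit a depth-1 regression tree (stump) to residuals r by exhaustive search."""
    best = (np.inf, 0, 0.0, r.mean(), r.mean())  # (sse, feature, threshold, left value, right value)
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j])[:-1]:  # every threshold leaving both sides non-empty
            left = X[:, j] <= t
            lv, rv = r[left].mean(), r[~left].mean()
            sse = ((r[left] - lv) ** 2).sum() + ((r[~left] - rv) ** 2).sum()
            if sse < best[0]:
                best = (sse, j, t, lv, rv)
    return best[1:]


def predict_stump(stump, X):
    j, t, lv, rv = stump
    return np.where(X[:, j] <= t, lv, rv)


def boost(X, y, n_rounds=50, lr=0.1):
    """Each stump fits the current residuals (the negative gradient of squared loss)."""
    base = y.mean()
    pred = np.full(len(y), base)
    stumps = []
    for _ in range(n_rounds):
        stump = fit_stump(X, y - pred)
        pred = pred + lr * predict_stump(stump, X)  # shrink each weak learner's contribution
        stumps.append(stump)
    return base, stumps, pred


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1]
base, stumps, pred = boost(X, y, n_rounds=20)
```

The final prediction is the base value plus the shrunken sum of all stumps. No single stump is accurate on its own.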

Design Choices

PackBoost was developed for a public data science competition focused on financial markets. Its design reflects this context:

- Synchronized ensemble feature sampling
  - Ensemble of weak learners for robustness
  - Feature synchronization to encourage orthogonality
- Era-aware split selection
  - Improved robustness across market regimes
- Round-forward sample paths
  - Enables massive parallelization
  - Preserves orthogonality across boosting rounds

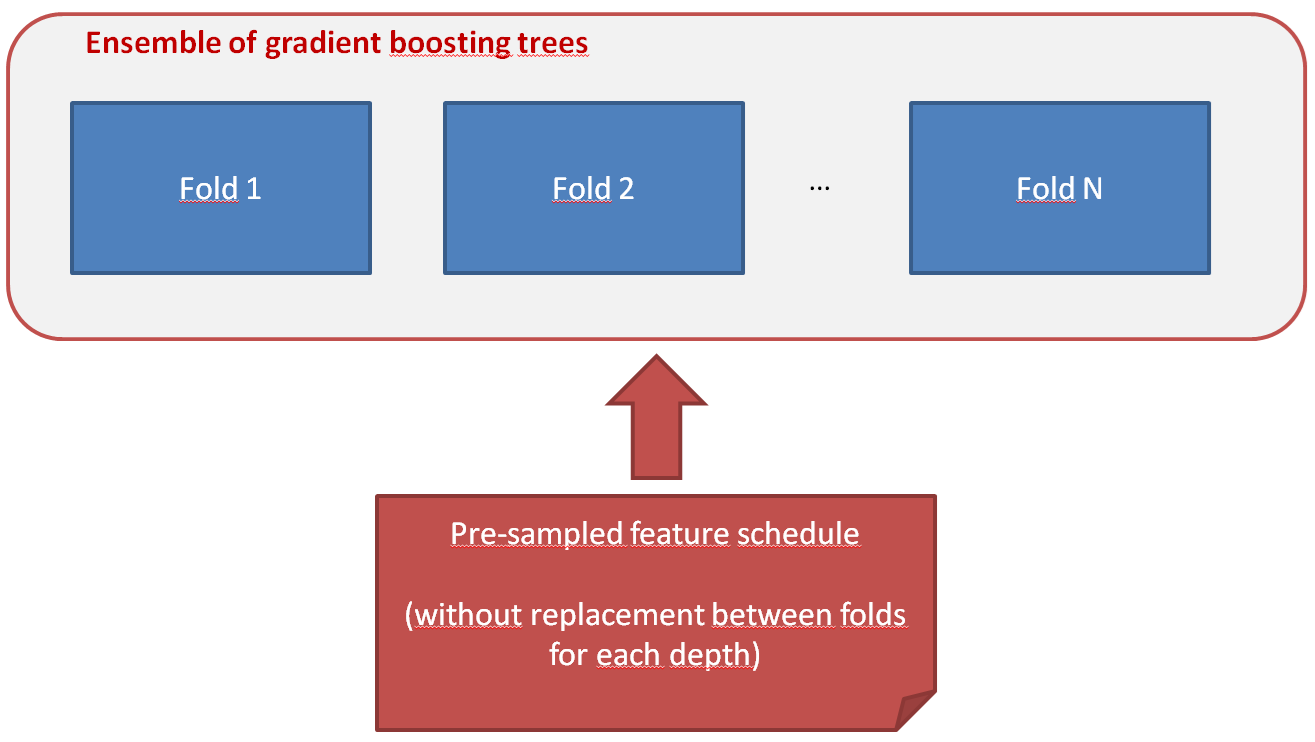

Ensemble Feature Synchronization

Within a given round, no feature is reused across folds. When `split_feature_candidates` is much smaller than `total_features`, this enforces approximate orthogonality between ensemble members.

Candidate features are pre-sampled into a schedule indexed by fold and tree depth.

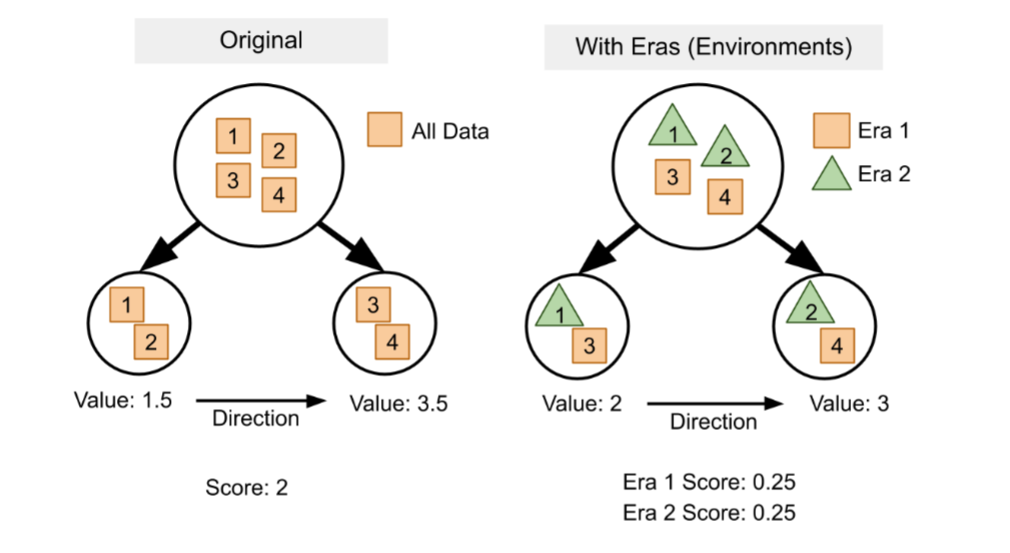
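Below is a minimal sketch of such a schedule for one round. It assumes that each fold draws from its own disjoint pool of roughly `total_features / n_folds` features, which rules out cross-fold reuse within the round. The function `feature_schedule` and its parameters are hypothetical, with `k` standing in for `split_feature_candidates`.

```python
import numpy as np


def feature_schedule(n_features, n_folds, max_depth, k, seed=0):
    """Pre-sample k candidate features per (fold, depth) for one boosting round.

    Hypothetical sketch: features are first partitioned into disjoint per-fold
    pools, so no feature appears in more than one fold during this round.
    """
    rng = np.random.default_rng(seed)
    pools = np.array_split(rng.permutation(n_features), n_folds)  # disjoint pools
    schedule = np.empty((n_folds, max_depth, k), dtype=np.int64)
    for f, pool in enumerate(pools):
        if len(pool) < k:
            raise ValueError("each fold's pool must hold at least k features")
        for d in range(max_depth):
            # Distinct candidates within one (fold, depth) cell, drawn from this fold's pool only.
            schedule[f, d] = rng.choice(pool, size=k, replace=False)
    return schedule


sched = feature_schedule(n_features=100, n_folds=4, max_depth=3, k=5, seed=0)
```

Calling the function with a new seed each round yields a fresh partition for that round. The whole schedule is fixed before any tree is grown, so folds never need to coordinate during training.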

Era-Aware Splitting Criterion

Splits are selected using an era-aware criterion ([reference TBD]). Instead of a single global score, splits are evaluated per era and then aggregated.

Scoring splits per era can select different splits than a single global criterion would.

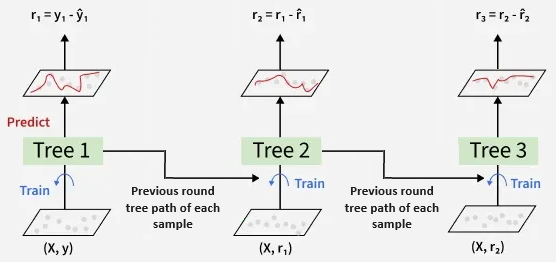
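The sketch below contrasts a global criterion with an era-aware one on a toy dataset. The exact criterion is not specified here, so it assumes squared-error reduction as the split score and a plain mean over eras as the aggregation. `sse_gain` and `best_split` are hypothetical helpers. Feature 0 only separates the two eras: it dominates the pooled score but carries no signal within either era.

```python
import numpy as np


def sse_gain(y, left):
    """Reduction in squared error from splitting y by boolean mask `left` (0 if a side is empty)."""
    if left.sum() == 0 or (~left).sum() == 0:
        return 0.0
    total = ((y - y.mean()) ** 2).sum()
    parts = ((y[left] - y[left].mean()) ** 2).sum() + ((y[~left] - y[~left].mean()) ** 2).sum()
    return float(total - parts)


def best_split(X, y, eras=None):
    """Return (feature, threshold): global gain if eras is None, else mean per-era gain."""
    best, best_score = None, -np.inf
    for j in range(X.shape[1]):
        for t in np.unique(X[:, j])[:-1]:
            left = X[:, j] <= t  # samples with x <= t go left
            if eras is None:
                score = sse_gain(y, left)
            else:
                score = np.mean([sse_gain(y[eras == e], left[eras == e]) for e in np.unique(eras)])
            if score > best_score:
                best, best_score = (j, float(t)), score
    return best


eras = np.array([0, 0, 0, 0, 1, 1, 1, 1])
X = np.array([[0, 0], [0, 1], [0, 0], [0, 1],
              [1, 0], [1, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([0, 1, 0, 1, 10, 11, 10, 11], dtype=float)

print(best_split(X, y))        # (0, 0.0): the era-separating feature wins globally
print(best_split(X, y, eras))  # (1, 0.0): the within-era signal wins per era
```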

Shared Tree Paths for Parallel Training

Instead of growing each tree recursively, PackBoost reuses tree paths from previous rounds. The resulting trees are not greedily optimal, but this trade-off enables large-scale parallelization.

Reusing paths across rounds enables parallel split evaluation.
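The sketch below illustrates one way to read path reuse, under a stated assumption. Suppose each sample's node index at a given depth is carried over from a previous round rather than recomputed by recursive growth. Then the split search for all nodes at that depth collapses into one batched histogram pass. The function `level_split_gains` is hypothetical, features are assumed to be pre-binned, and the score is the squared-loss gain computed from gradient sums.

```python
import numpy as np


def level_split_gains(node_ids, x_binned, grad, n_nodes, n_bins):
    """Score every threshold of one binned feature for all nodes at one depth at once.

    node_ids: per-sample node index at this depth (assumed reused, not recomputed).
    x_binned: per-sample bin index in [0, n_bins).
    grad:     per-sample gradient (the residual under squared loss).
    Returns gains of shape (n_nodes, n_bins - 1); [n, b] scores "bin <= b goes left".
    """
    flat = node_ids * n_bins + x_binned  # one histogram cell per (node, bin)
    g_hist = np.bincount(flat, weights=grad, minlength=n_nodes * n_bins).reshape(n_nodes, n_bins)
    c_hist = np.bincount(flat, minlength=n_nodes * n_bins).reshape(n_nodes, n_bins)
    g_left = np.cumsum(g_hist, axis=1)[:, :-1]
    c_left = np.cumsum(c_hist, axis=1)[:, :-1]
    g_tot = g_hist.sum(axis=1, keepdims=True)
    c_tot = c_hist.sum(axis=1, keepdims=True)
    g_right, c_right = g_tot - g_left, c_tot - c_left
    with np.errstate(divide="ignore", invalid="ignore"):
        # Squared-error reduction: G_L^2/n_L + G_R^2/n_R - G^2/n
        gain = g_left**2 / c_left + g_right**2 / c_right - g_tot**2 / c_tot
    return np.where((c_left > 0) & (c_right > 0), gain, 0.0)  # empty sides score 0


node_ids = np.array([0, 0, 0, 0, 1, 1, 1, 1])
x_binned = np.array([0, 1, 2, 3, 0, 1, 2, 3])
grad = np.array([-1.0, -1.0, 1.0, 1.0, 2.0, -2.0, 2.0, -2.0])
gains = level_split_gains(node_ids, x_binned, grad, n_nodes=2, n_bins=4)
best_bin = gains.argmax(axis=1)  # best threshold per node, found in the same pass
```

Every node is handled by the same array operations, so the per-node searches are independent. They can be spread across cores or devices without waiting on a parent split.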